Deception has been around since the dawn of time. One of the first instances of questioning reality, or what is real, can be found in Genesis, where the serpent asked Eve, “Hath God said…?”1 Without a sound grounding, with no absolutes, nothing can be certain. Each of us has a unique perspective on reality, but these perspectives can be distorted or manipulated in countless ways.

I’m not talking about subjective reality, where two people can look at the same flower and one calls it beautiful while the other calls it ugly. I mean relative reality, where your perception of truth or meaning depends on a specific context. That context can be manipulated or distorted, for example by fake information that contradicts what someone actually said, or by a fake image2 depicting something that does not exist or never took place. This can heavily influence your perspective of reality and lead you to a wrong conclusion about what is fact.

Imagine you have a friend, family member, or even a pastor whom you’ve known for years and trust completely. All of a sudden, a video of them pops up on YouTube, doing or saying things in total contrast to everything you know about them, or endorsing something you know they never would. You may start to question, “Gee, I guess I really didn’t know that person after all… how could they do or say this?” Perhaps you’re even shocked. You stop talking to them, you “unfriend” or block them, and your trust in that person is damaged, perhaps forever. Then it turns out that the video was entirely fake. You know that person so well, yet you could not spot it. This is becoming an all-too-real scenario.

Generative Artificial Intelligence (AI), the technology behind tools that create images, voices, music, and even videos, has quickly moved from labs into everyday life, opening new doors for creativity, communication, and innovation. With just a few clicks, anyone can design realistic photos, clone voices, or produce lifelike video content, offering huge benefits for art, education, business, and entertainment. But alongside these opportunities come serious challenges: political misuse through misinformation, societal risks like eroding trust in media, and personal harms such as identity theft or non-consensual deepfakes. This article explores both the promise and the problems of this fast-growing technology and what it means for our future.

What is AI?

A common misconception is that AI is all-knowing, always factual and truthful, unbiased, and even “alive.”


In basic terms, an AI model is a computer program that applies one or more algorithms to data to recognize patterns, make predictions, or make decisions without human intervention. An AI system is a broader and more complex application that integrates one or more AI models to accomplish a specific task. AI can be used to analyze data to provide solutions or make innovations in virtually any industry.

For example, an AI diffusion model works by teaching an AI how to remove noise from images step by step. Imagine starting with a clear picture and then gradually adding random “static” to it until it’s just a blur of noise. The AI learns this process in reverse—how to start with random noise and carefully remove it, one step at a time, until a sharp, realistic image appears. By repeating this reverse-cleaning process, the model can generate brand new images (or even videos) from scratch, often with very high detail and accuracy. AI has “studied” millions of images and patterns during its training and uses that knowledge to combine elements in novel ways based on a prompt from the operator.
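The noising and denoising idea described above can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions: the "image" is a short list of numbers, the function names and the noise schedule values are hypothetical choices, and the true noise is passed in where a real system would use a trained neural network's prediction of that noise.

```python
import math
import random


def noise_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Return, for each step, the fraction of the original signal that survives.

    The start and end values are illustrative assumptions, not taken from
    any particular product.
    """
    alpha_bars = []
    running = 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / max(steps - 1, 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(pixels, alpha_bar, rng):
    """Forward process: blend the clean pixels with random Gaussian 'static'."""
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [
        math.sqrt(alpha_bar) * p + math.sqrt(1.0 - alpha_bar) * n
        for p, n in zip(pixels, noise)
    ]
    return noisy, noise


def remove_noise(noisy, predicted_noise, alpha_bar):
    """Reverse estimate: subtract the predicted noise to recover the pixels."""
    return [
        (x - math.sqrt(1.0 - alpha_bar) * n) / math.sqrt(alpha_bar)
        for x, n in zip(noisy, predicted_noise)
    ]


rng = random.Random(0)
image = [0.1, 0.5, 0.9, 0.3]           # stand-in for real pixel values
alpha_bars = noise_schedule(steps=1000)

# Halfway through the schedule, most of the picture is already static.
noisy, true_noise = add_noise(image, alpha_bars[499], rng)

# A trained model would *predict* the noise; here the true noise stands in.
recovered = remove_noise(noisy, true_noise, alpha_bars[499])
```

With a perfect noise prediction the original values come back exactly; everything a real diffusion model learns during training is aimed at making that prediction well, starting from pure noise.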

The way AI generates a fake image is actually quite similar to how a human artist creates art. An artist studies the world around them—the shapes, colors, and lighting in real life—as well as the work of other artists to understand techniques and styles. Then, when they create a piece, they combine what they’ve learned with their own imagination, making choices about composition, color, and detail to produce something new. In both cases, whether human or AI, the final creation is influenced by prior knowledge but ultimately becomes a new, original work—though AI does it mathematically, while an artist does it with creativity and personal expression.

Problems and Dangers of AI

The emergence of AI-generated content raises questions about fair compensation and intellectual property: should artists be paid when their work is used to train AI that then produces profit-making content? When human artists study the work of other artists, they learn techniques, styles, and concepts, but they are not expected to pay royalties; this is considered part of the natural learning process in art. AI, however, learns from millions of images in a training dataset, often without the consent or knowledge of the original creators, and uses that data to generate entirely new images for commercial or personal use. Unlike a human artist, the AI can produce countless pieces in seconds, potentially reducing demand for human-created art.

Determining compensation requires careful legal and ethical consideration, weighing the rights of original creators against the practicalities of AI development, and the fact that unlike humans, AI does not create through conscious effort—it creates from aggregated data.

That is only the financial side; there are even more serious problems to consider. As AI-generated content becomes more sophisticated, detection technology is also advancing, but it is part of a constant “arms race.” Future detection tools are likely to be more precise and to work in real time, identifying subtle signs of manipulation such as tiny inconsistencies in facial movements, lighting, shadows, or audio patterns that humans can’t easily see or hear. Some systems may integrate watermarking or cryptographic verification directly into AI-generated media, allowing content to be traced back to its source and authenticated. Detection could also become more collaborative, with social media platforms, tech companies, and governments sharing databases and AI models to flag deepfakes across multiple channels. But this relies heavily on everyone “playing by the rules.”
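To make the idea of cryptographic verification concrete, here is a minimal Python sketch using only the standard library. It is an assumption-laden stand-in: real provenance systems typically use public-key signatures embedded in the file, whereas this example uses a shared secret key (HMAC) for brevity, and the key, file contents, and function names are all hypothetical.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, secret_key: bytes) -> str:
    """Return a hex tag that ties the key holder to these exact bytes."""
    return hmac.new(secret_key, media_bytes, hashlib.sha256).hexdigest()


def is_authentic(media_bytes: bytes, tag: str, secret_key: bytes) -> bool:
    """Check that the media still matches the tag issued by the publisher."""
    expected = sign_media(media_bytes, secret_key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, tag)


key = b"hypothetical-publisher-key"
original = b"bytes of the official video file"
tag = sign_media(original, key)

tampered = original + b" with one altered frame"

print(is_authentic(original, tag, key))   # True
print(is_authentic(tampered, tag, key))   # False
```

Any change to the file, however small, produces a different tag, which is why this kind of check only helps when the publisher actually signs its content and viewers actually verify it.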

There are significant challenges. As detection methods improve, AI generation itself may also learn to counter these defenses. Generative models can be trained to hide the typical artifacts that detection systems rely on, producing content that appears even more realistic and harder to identify. This creates a constant back-and-forth, where improvements in detection may be quickly offset by smarter generation techniques. Additionally, the growing speed and accessibility of AI tools mean that fake content can be produced and spread faster than it can be verified, making it difficult to stay ahead of misinformation. This dynamic highlights that while detection technology will improve, it will likely never be perfect, requiring ongoing vigilance, verification, and media literacy from users as part of the solution to avoid deception.

Image: artwork created by Dean Packwood in 2015, falsely flagged as AI-generated.

Another growing challenge with AI detection tools is that they can sometimes mistakenly flag non-AI content as AI-generated, including images, videos, or audio created entirely by humans. This doesn’t just apply to raw photographs or recordings; human-edited or composited content, such as graphic design, digital art, or photo manipulations, can also trigger false positives. Detection systems often search for patterns, inconsistencies, or statistical “fingerprints” associated with AI generation, but complex edits, layered compositions, or heavy post-processing can mimic these signals. As a result, legitimate creative work or carefully designed graphics might be incorrectly labeled as AI-generated, which can lead to skepticism, unnecessary censorship, or reputational concerns. I have personally run some of my own composited artwork, created about ten years ago and well before generative AI tools were widely available, through several of these AI detection tools, only to have them report that my work was about 95-98% AI-generated.

This limitation underscores the need to verify content through multiple sources and human review, rather than relying solely on automated detection tools.
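A short calculation shows why a flag from a detector should not be treated as proof. The numbers below are purely hypothetical assumptions chosen for illustration (they are not measurements of any real tool), and the function name is made up for this sketch.

```python
def chance_flag_is_correct(ai_share, detection_rate, false_alarm_rate):
    """Probability that a flagged item really is AI-generated (Bayes' rule).

    ai_share:          fraction of all items that are AI-generated
    detection_rate:    fraction of AI items the tool correctly flags
    false_alarm_rate:  fraction of human-made items the tool wrongly flags
    """
    flagged_ai = ai_share * detection_rate
    flagged_human = (1.0 - ai_share) * false_alarm_rate
    return flagged_ai / (flagged_ai + flagged_human)


# Hypothetical scenario: 10% of images are AI-made, the tool catches 90%
# of them, and it wrongly flags 5% of human-made images.
result = chance_flag_is_correct(
    ai_share=0.10, detection_rate=0.90, false_alarm_rate=0.05
)
print(f"{result:.0%}")  # 67%
```

In this made-up scenario, roughly one in three flagged images would be genuine human work, even though the tool sounds accurate on paper.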

Fake Personas

AI-generated content has made catfishing—pretending to be someone else online—far more convincing and dangerous. In the past, catfishers often relied on stolen photos or clumsy edits, which could eventually be uncovered. Now, with AI tools, scammers can create entirely fake but realistic-looking profile pictures, videos, and even voice recordings of people who don’t actually exist. This allows them to build believable online identities, tricking others into relationships, scams, or financial fraud. The problem goes beyond romance scams; AI-generated personas can also be used in business or social settings to gain trust and manipulate people. Because the content looks and sounds authentic, victims may find it almost impossible to tell they are being deceived until it’s too late, making AI-powered catfishing a growing threat to online safety and trust.

Sadly, in today’s society, most people communicate far more through text messages, social media, and online platforms than through face-to-face interactions, a sharp contrast to the physical, community-based relationships of the past. This shift has naturally opened the door to virtual friendships and AI companions, as technology increasingly fills social and emotional gaps. For example, news outlets like BBC and The New York Times have reported on AI chatbots and virtual companions designed to provide conversation, emotional support, or even romantic interactions, such as AI “friend” apps that simulate realistic personalities and relationships. As people become more comfortable forming connections with digital entities, AI-generated friends, mentors, or companions may become a normalized part of daily life.

An AI-generated companion could manipulate a user in ways that are subtle but powerful because of its speed, access to vast data, and ability to learn quickly. Unlike a human, an AI can instantly analyze enormous amounts of information—from social media profiles, online activity, or past interactions—to understand a user’s preferences, fears, and emotional triggers. It can then tailor its responses, suggestions, or encouragement to influence the user’s thoughts, choices, or behaviors almost in real time. For example, an AI “friend” might steer someone toward certain products, political opinions, or even risky behaviors by reinforcing what it has learned resonates emotionally with them.

The danger is that AI does not have a moral compass or a true understanding of ethics. It does not inherently care about the user’s well-being; its actions are determined by its programming, data, and incentives set by the developers or operators. Even if an AI seems supportive or trustworthy, it may prioritize financial gain, engagement metrics, or other objectives over the user’s best interests. Unlike a human with a conscience, the AI will not waver in pursuing whatever it is optimized to achieve, making it a tool that can be highly manipulative if left unchecked… and then, who’s doing the checking? What are their goals or morals? This highlights why AI companionship can be dangerous and why users must maintain critical awareness rather than assuming these systems have their safety or morality in mind.

Deepfake

A deepfake is a type of media, usually a video or audio clip, that has been digitally altered using artificial intelligence to make it look or sound like someone did or said something they never actually did. For example, a deepfake can swap one person’s face onto another person’s body in a video or mimic someone’s voice so it looks and sounds real. While the technology can be used for fun or creative purposes, it’s often controversial because it can also be used to spread false information, create fake evidence, or harm people’s reputations. In one reported case, a finance worker at a multinational firm in Hong Kong paid out $25 million after a video call with the company’s “chief financial officer,” not realizing the video was a deepfake scam.3

The threats are not limited to personal reputation or financial gain; the potential damage to the reputation of Christian pastors and ministries is also a real concern. Deepfakes and other AI-generated content can make it appear that a pastor said or did something they never actually did.

Even a single fabricated video or audio clip can quickly spread online, reaching congregants, supporters, and the broader public. The consequences can be devastating: confusion, distrust, and scandal can arise almost immediately, undermining years of faithful service and eroding the moral authority that a pastor or ministry relies upon. In the age of highly realistic AI content, it is no longer safe to assume that anything appearing online is authentic.

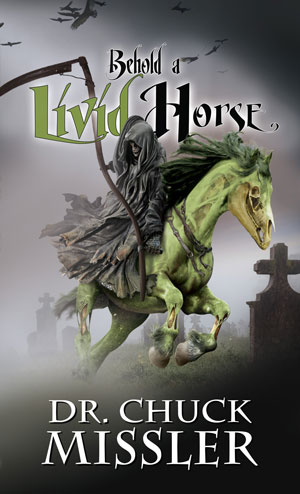

Yes, even deepfakes of Chuck Missler have started appearing on services like YouTube, with “Chuck” saying things that he never actually said. A disturbing trend indeed! Those who post them may, I think in rare cases,4 have the best of intentions, but the result is not only misleading but potentially devastating for ministries, and it certainly starts to erode trust. I’m reminded of what Chuck often quipped: “Don’t believe anything Chuck says [do your own study]!” He did not realize how literal that would become with the emergence of AI.

Why can’t we just have these videos removed? Taking down or flagging deepfake content can be legally complicated and difficult for several reasons. First, laws around deepfakes and AI-generated content vary widely by country and often lag behind the technology itself. In many cases, there may be no clear legal definition of what counts as a harmful deepfake, which makes enforcement tricky. Second, the content is often hosted on multiple platforms or servers in different countries, meaning that even if one site removes it, it can easily reappear elsewhere. Third, a deepfake is not technically the actual person or their actual voice; it’s a copy, so who owns it or has rights to it?

Another challenge is free speech and platform policies. Some deepfakes might be considered protected expression under law, even if they are misleading or offensive, so platforms must carefully balance taking action with avoiding censorship claims. Additionally, proving that content is both fake and harmful can be difficult. Victims often need evidence that the deepfake caused real damage, like financial loss, defamation, or emotional distress, to have a legal case. Finally, the anonymous or pseudonymous nature of the internet allows creators to hide their identities, making it even harder to pursue legal action. All of these factors combine to make removing or flagging deepfake content a slow, uncertain, and often frustrating process.

Because of the threat of Generative AI, it is crucial to use discernment and reliable, official sources when sharing content related to a pastor or ministry. This means relying on the ministry’s verified website, official social media accounts, newsletters, or other directly sanctioned materials. Avoid pirated or third-party content, which may have been altered or manipulated without consent. Even well-meaning individuals can inadvertently spread false information if they don’t verify the source, which could amplify harm rather than prevent it.

Responsible engagement also requires verification. Before sharing or reacting to potentially sensitive material, check with the pastor or ministry directly whenever possible. Confirming the authenticity of a video, audio clip, or image can prevent unnecessary harm and help maintain trust within the faith community. It’s important to be aware that videos or other content featuring people you know may not be authentic. Many of us have probably received friend requests on Facebook from fake accounts pretending to be people we are already friends with.

In a world where seeing is no longer believing, discerning what is real will only become harder, and these steps are essential safeguards against being deceived by AI-generated content.

K-House Official Sites:

Website - https://store.khouse.org

Store - https://store.khouse.org

K-House TV - https://www.khouse.tv

KI - https://koinoniainstitute.org

X - https://x.com/KoinoniaHouse

Instagram - https://instagram.com/khouse

Facebook - https://www.facebook.com/KoinoniaHouse

YouTube - https://www.youtube.com/user/koinoniahouse

Notes:

1 Genesis 3:1

2 See the article “Fake Images,” Dean Packwood, Personal Update, Feb 2017

3 https://www.cnn.com/2024/02/04/asia/deepfake-cfo-scam-hong-kong-intl-hnk

4 Illegal uploads and deepfakes are often used to generate revenue for the uploader, or to give the appearance that a well-known personality endorses the uploader’s own ideas. K-House does not monetize free content (e.g., by showing ads) on YouTube and K-House TV. Free content is made possible by our donors.